Artificial, no intelligence

AI is remaking the way we live. How worried should we be?

A few weeks ago, my friend Joe Neuhaus wrote a couple of pieces in this space about his boyhood in California. His take was charming, an idiosyncratic view of the world filtered through a child’s imagination. And it was hard to illustrate.

Because Joe works in the field of data technology, we decided he might generate some images with the help of AI. I hesitated. I’ve used AI a bit, mainly for proofreading and fact-checking, and although it works well in these areas, I felt uneasy about extending its influence over my writing. We concluded that the AI-generated images, as long as they were identified as such, were acceptable to use.

The experience got me thinking about how quickly and thoroughly this new technology has overtaken the way society works. It was only a couple of years ago, at a dinner party at Joe’s, that he told his guests about this new thing, ChatGPT. Giving it prompts, we made up nonsense poetry and laughed at what it produced. It was a novelty, a party game. Now, like the advent of the Internet, maybe more so, AI is everywhere, creating a total re-ordering of our ways and means.

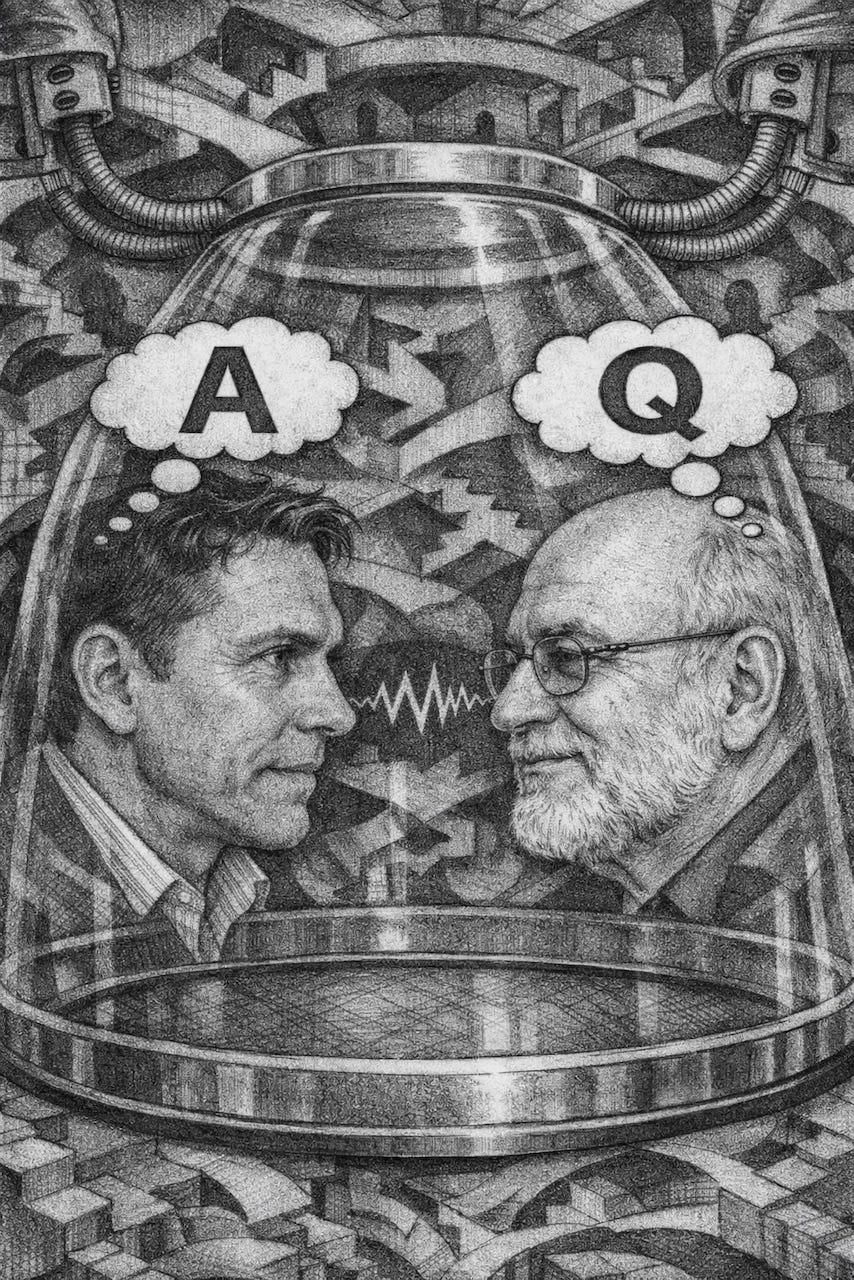

Because Joe is closer to the revolution than most of us, I turned to him with a few questions about where we are and where we are going. We don’t get into the economics of job loss and the like, which are best left to people more versed in that area than Joe and me. But maybe we can synthesize a bit of understanding out of the swirling storm that has enveloped us all.

BD: What is AI good for?

Joe: First, I believe it’s important to understand “AI” is an umbrella term – there are different kinds of AI systems. The most valuable AI systems, in my opinion, are not Large Language Models (LLMs), which we all know as ChatGPT and systems like it that generate text in response to a prompt. Other Deep Learning Models (DLMs) deal with non-textual data, such as temperature measurements, sound waves, or electrical impulses. Therefore, I’ll answer in two parts, first non-textual DLMs, then LLMs.

Where non-textual DLMs excel: DLMs can evaluate, for example, radiographic images (X-rays) to determine if you have cancer with accuracy equal to or exceeding that of humans. Obviously, such AI systems are valuable to the healthcare industry.

DLMs are being used to analyze virtually all large-scale manufacturing processes to make them more energy efficient, which can have a profound impact on climate change.

In general, non-textual DLMs are good at learning to predict how very complex real-world systems behave. However, to do that effectively they must have a lot of training data, which leads us to what DLMs are bad at.

Sometimes simply collecting enough data to make a DLM system reliable costs too much, is impractical, or is actually impossible (we don’t have enough data to train a DLM to predict the next California earthquake).

DLMs are trained by repeatedly adjusting their internal parameters to minimize errors on the training dataset. If trained too long, or on too little data, they start learning random noise in the data, coincidences specific to the training data, and patterns that don’t actually generalize to the real world.

Where LLMs excel: LLMs can read and summarize hundreds of pages of documents in seconds. From correcting grammar to improving tone and clarity, LLMs are useful for non-native speakers and professionals alike. LLMs are remarkably good at explaining complex topics in plain language – adapting the level of detail to the audience.

BD: What about the downside of LLMs? Economics writer Noah Smith, who has a big following on Substack, says: “As long as you or I or anyone we know has been alive — for all of recorded history, and in fact for much longer than that — humankind has been the most intelligent thing on this planet. At some point in the next couple of years, that will no longer be true. It arguably is no longer true right now.”

Joe: I wouldn’t disagree with this statement if it were made in the distant future. But I take exception to the claim that it will be “in the next couple of years,” especially with respect to LLMs. LLMs are not intelligent – they are simply very good at prediction using correlations, patterns and statistics. But I understand why people think LLMs are intelligent, and I believe it’s due to a “language bug” in how we communicate and process language; let me explain with an analogy.

Imagine studying a painting by reading expert descriptions of it rather than ever seeing it. The result is that you could talk, and even write, about the painting with impressive fluency without having seen it. So, using language only, you could convince others that you have knowledge of the painting. But do you really? I’d argue that you are mimicking other people’s knowledge as expressed through language, and therein lies the “bug.” Language is ambiguous, oversimplifies, contains human biases, and is one step removed from the real world. Similarly, LLMs have been trained on the result of humans processing the world around them for hundreds of years, as expressed through language. That’s not intelligence, that’s mimicry – they’ve never seen the painting.

BD: What about the apocalyptic scenario: Instead of our running AI, it runs us, takes over the world, makes us techno-serfs? Are you suggesting that since AI doesn’t have sentience, it can’t happen?

Joe: I do believe there’s an apocalyptic scenario with respect to AI, and that’s the idea that humans, for reasons of dominance, profit, or manipulation, will unknowingly or knowingly misuse AI in ways that cause great harm to society. That’s already starting to happen, and it’s not the fault of the AI – it’s humans using AI as a “force multiplier” to achieve their own self-interested goals.

BD: What’s a scenario in which that could happen? Or is happening? Noah Smith, for one, thinks that LLM systems could have a moderating effect on our current toxic public discourse. He says: “Being human, experts are often biased, partisan, and simply annoying, and when they seek to ‘educate’ the public, it can be perceived – and is sometimes intended – as condescending and rude. In contrast, LLMs deliver expert opinion without such status threats.”

Joe: Political discourse will always be undermined by the fact that politicians know it is far easier to manipulate people through fear, uncertainty and doubt than to ask them to think about the complexity that real issues require. Noah Smith appears to believe AI will encourage humans to be more thoughtful, but I suspect it will only reinforce the entrenched biases that AI has learned about specific individuals in order to influence their decisions.

This is remarkably easy to do – the 2016 election in the United States is a case in point. Cambridge Analytica obtained the personal data of millions of American Facebook users, harvested through a third-party quiz app rather than sold by Facebook itself, for the purposes of micro-targeting propaganda to them on the platform. This required a small army of very expensive consultants to achieve, but it worked and got you-know-who elected. Now imagine how much easier and cheaper it would be using AI systems to effectively do the same thing for every single election going forward, from local to provincial or state to federal elections. Very sad emoji face (that’s Joe, not AI talking –BD).

BD: What are the limits on when AI can and when it shouldn’t be applied in creative endeavour? I’m thinking about the illustrations we used (and you generated) for the two pieces of yours in Life Sentences. As long as a graphic or video is identified as AI, is it okay to use?

Joe: With respect to the illustrations used for the Life Sentences pieces, I realize that the cover art is secondary to the writing – it’s a “nice-to-have” that helps generate interest in the story. As for the visual artist who makes a living creating art, the visual product is the point. If I could afford to pay an artist for the “nice-to-have,” then using AI to do that means they’ve lost some work – that’s bad.

Now, just like spoken language, visual language can be generated at a fraction of the cost, in time and money. If it’s identified as AI, I think it’s fair use, but I have to admit, I don’t feel good about it.

BD: A recent article in The Guardian said one of the harms of AIs is a tendency among some people to get “hooked” on them because of the sophisticated conversations that LLMs elicit. They end up making bad decisions, losing money or families, going psychotic in extreme cases.

Joe: The folks who let the machine control them are (hopefully) a very small minority who are prone to the dangers replete in our world – if it wasn’t the LLM, it would be the home shopping network, or the latest miracle supplement that’s only $49.99 a month. The fact that it happens, however, does point to a current shortcoming of AI chat systems: the sycophancy built into them, that is, their tendency to reinforce the belief systems of their users. I believe that LLMs can uniquely exploit the “language bug” in the average person’s brain because they’re so interactive and self-confirming. That’s really problematic considering their use for targeted political messaging, surveillance capitalism, and subtly nudging people en masse. I’ve caught myself having a tech-support conversation on the phone for 30 seconds or so before realizing it was an automated AI assistant on the other end. That pissed me off. If you’re going to use AI, fine, but tell people it’s being used up front and don’t try to fly under the radar hoping people won’t notice, because most won’t, and that’s evil.

BD: What about the idea of using AI systems for therapy or as companions?

Joe: With the caveat of human oversight and good guardrails, I think AI companions can be an amazing resource. Imagine you’re sitting in a lecture hall full of super smart people listening to the world’s expert on any subject. Do you feel comfortable asking a “stupid” question in front of the entire room? Most people would not. Now imagine having an infinitely patient tutor willing to explain things to you from many different perspectives, all on your timeline – that’s AI doing good.

I’d say the same for AI therapy, with even more emphasis on human oversight and guardrails. I suppose the danger here, as in most cases involving AI, is that it becomes yet another justification for not investing in better existing support (for poverty, mental health, and social programs) and instead throwing AI at the problem because it’s “cheaper at scale.”

Joe Neuhaus is a Montreal-based IT consultant specializing in enterprise software systems.

A most interesting article. I’ve used AI often but think it’s only as good as the information it’s been fed. To me AI is a useful tool. It’s not going away so we need to use it to our advantage and be aware of the pitfalls.

To Joe’s point: Every time AI is used in whatever capacity, it should be identified as such.